Color Point Cloud: See Your Dataset with Greater Clarity

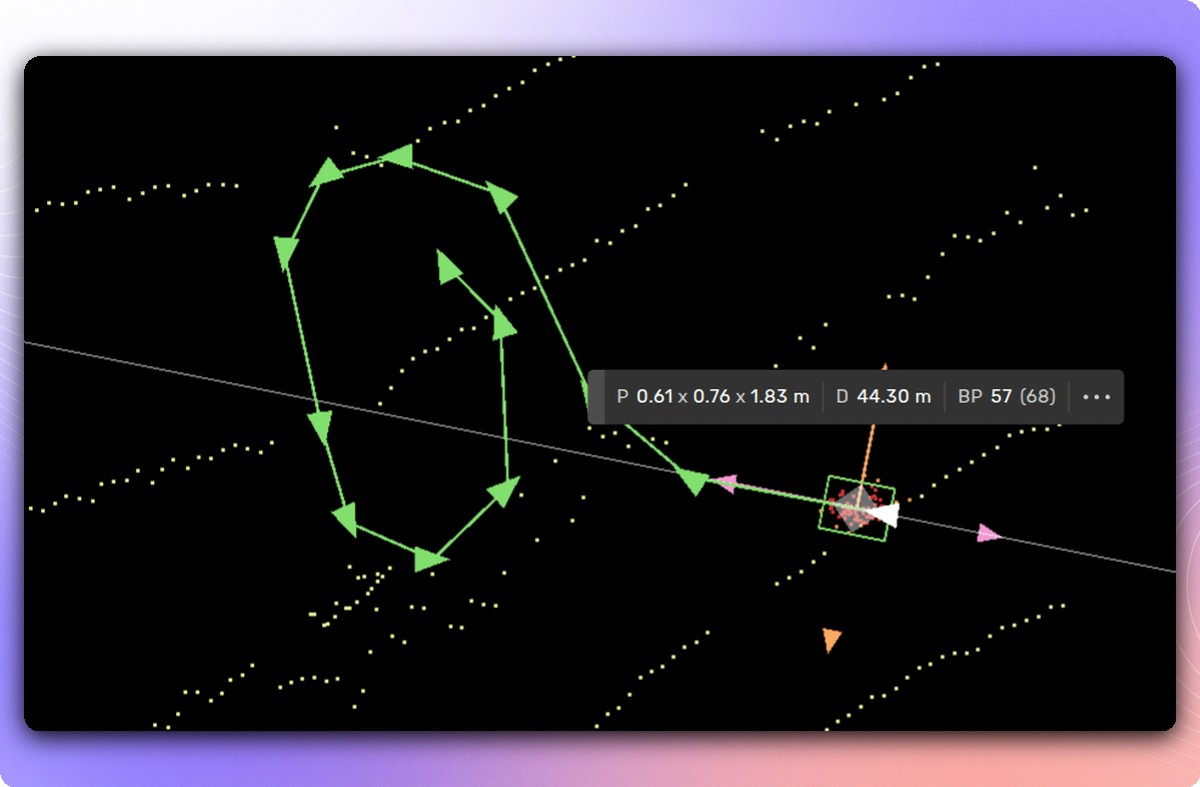

We're excited to introduce Color Point Cloud, a new capability that transforms how you work with sensor-fusion data. This feature projects camera images directly onto your point cloud in full color, using sensor calibrations and ego vehicle data to give you a richer, more intuitive view of 3D scenes.

This update reflects our commitment to helping teams get the most annotated data for their budget by making human feedback faster and more effective:

- Enhanced scene understanding: Color Point Cloud fuses 2D and 3D information, making it easier for annotators to recognize objects and understand spatial context—critical for multi-modal autonomy data.

- Faster calibration validation: Quickly identify time-sync and calibration issues that could compromise data quality, reducing rework and accelerating delivery.

How it works: Select any camera feed, and the platform instantly colors the point cloud using live computation from that 2D image. Switch cameras, and the view updates on the fly—no preprocessing required.
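
For readers curious about the underlying idea, here is a minimal sketch of coloring lidar points from a single camera image. It is an illustration under stated assumptions, not the platform's actual implementation: it assumes an undistorted pinhole camera, and the names `colorize_points`, `K`, and `T_cam_from_lidar` are hypothetical. It also skips occlusion checks and the ego-motion compensation that aggregated views would need.

```python
import numpy as np


def colorize_points(points, image, K, T_cam_from_lidar, default_color=(128, 128, 128)):
    """Assign an RGB color to each lidar point by projecting it into one camera image.

    points: (N, 3) array in the lidar frame.
    image: (H, W, 3) uint8 array.
    K: (3, 3) pinhole intrinsics (assumed; no lens distortion is modeled).
    T_cam_from_lidar: (4, 4) extrinsic calibration, lidar frame -> camera frame.
    Returns (colors, visible): an (N, 3) uint8 array and an (N,) boolean mask.
    """
    n = points.shape[0]
    # Points that no pixel covers keep a neutral fallback color.
    colors = np.tile(np.asarray(default_color, dtype=np.uint8), (n, 1))

    # Move points into the camera frame using the extrinsic calibration.
    homogeneous = np.hstack([points, np.ones((n, 1))])
    cam = (T_cam_from_lidar @ homogeneous.T).T[:, :3]

    # Only points in front of the camera can appear in the image.
    in_front = cam[:, 2] > 1e-6
    depth = np.where(in_front, cam[:, 2], 1.0)  # placeholder depth avoids division by zero

    # Pinhole projection to pixel coordinates (u = column, v = row).
    projected = (K @ cam.T).T
    u = np.floor(projected[:, 0] / depth).astype(int)
    v = np.floor(projected[:, 1] / depth).astype(int)

    height, width = image.shape[:2]
    visible = in_front & (u >= 0) & (u < width) & (v >= 0) & (v < height)

    # Nearest-pixel lookup; a real renderer might interpolate instead.
    colors[visible] = image[v[visible], u[visible]]
    return colors, visible
```

Switching cameras in this sketch simply means calling the function again with a different image and calibration pair, which is why no preprocessing step is needed.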

Our platform can color millions of points in real time across aggregated views, dramatically sharpening visual detail. This matters when working with complex sensor-fusion data: clearer images mean faster, more accurate human feedback, which directly translates to better training data and lower costs.

Real-world impact: Lane markings in rainy conditions often lose reflectivity in lidar-only views. With Color Point Cloud enabled, annotators can discern lane boundaries more clearly by referencing camera color information—improving annotation quality where it matters most. Our engineering team optimized this to work seamlessly across up to nine camera feeds simultaneously, delivering real-time performance at scale.

Color Point Cloud is available now as part of the Kognic platform.
