What Is Autonomous Driving Annotation?

Autonomous driving annotation is the process of labeling sensor data collected by self-driving vehicles so that machine learning models can learn to perceive and understand their environment. This includes marking objects in camera images, LiDAR point clouds, and radar returns with classifications, bounding boxes, semantic labels, and behavioral descriptions.

Modern autonomous driving programs require millions of annotated frames across diverse scenarios, weather conditions, and edge cases. As the industry shifts from perception-only models to end-to-end architectures, annotation is expanding from "what is in the scene" to "why things happen," requiring new approaches like Language Grounding and Chain of Causation workflows.

Get the most annotated data for your budget

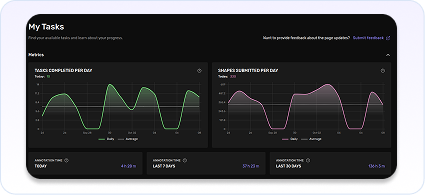

Every annotation counts. We ensure maximum throughput and quality, giving you the most data for your budget, without compromising on safety, trust, or productivity.

The data your models actually need

Unlock the potential of your models with our annotation solutions and overpowered platform, enabling human feedback at scale.

Active Learning Labeling for Smarter Models

Why active learning matters

Stop annotating data your model already understands. Kognic's active learning capabilities identify the samples that matter most—edge cases, uncertainties, and novel scenarios—so every annotation dollar drives real model improvement.

Continental Case Study

Zenseact Case Study

Why Choose Kognic?

Frequently Asked Questions

Kognic supports a comprehensive range of annotation types across both 3D and 2D data. In 3D, this includes cuboids, polylines, polylanes, semantic segmentation, points, and curves. In 2D, the platform handles bounding boxes, polygons, points, curves, and text annotations. These annotation types cover the full spectrum of autonomous driving perception tasks, from object detection and tracking to lane marking labeling and scene segmentation.
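To make the 3D cuboid type concrete, here is a minimal sketch of how a cuboid label is commonly represented: a center, dimensions, and a heading angle (yaw), from which the eight corners can be derived. The class and field names (`Cuboid3D`, `length`, `yaw`, and so on) are hypothetical and are not Kognic's export schema. The sketch also assumes a z-up frame and rotation about z only.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Cuboid3D:
    """Hypothetical minimal 3D cuboid label in a right-handed, z-up frame.

    Field names are illustrative only, not a real export format.
    """

    label: str
    cx: float
    cy: float
    cz: float
    length: float  # extent along the object's heading (local x-axis)
    width: float   # extent along the local y-axis
    height: float  # extent along the z-axis
    yaw: float     # rotation about z, in radians

    def corners(self) -> list[tuple[float, float, float]]:
        """Return the 8 corner points in the parent (e.g. LiDAR) frame."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        half_l, half_w, half_h = self.length / 2, self.width / 2, self.height / 2
        out = []
        for dz in (-half_h, half_h):  # bottom face first, then top face
            for dx, dy in ((half_l, half_w), (half_l, -half_w),
                           (-half_l, -half_w), (-half_l, half_w)):
                # Rotate the local offset by yaw, then translate to the center.
                x = self.cx + c * dx - s * dy
                y = self.cy + s * dx + c * dy
                out.append((x, y, self.cz + dz))
        return out


if __name__ == "__main__":
    car = Cuboid3D("car", 10.0, 2.0, 0.8, 4.5, 1.8, 1.6, 0.0)
    for corner in car.corners():
        print(corner)
```

Storing center, size, and yaw rather than raw corners keeps the label compact and guarantees the box stays rectangular while annotators adjust it.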

The platform supports a wide range of use cases critical to autonomous driving development. These include lane and road marking annotation, dynamic and static object labeling, instance segmentation, freespace detection, traffic sign classification, parking detection, interior sensing, driver monitoring, camera blockage detection, light source identification, and scene classification. Each use case comes with proven workflows and quality processes refined across production programs with leading customers.

Kognic can provide the platform, the annotation workforce, or both. Its flexible operations model adapts to how your organization works: you can run annotation projects with your own internal teams on the Kognic platform, use Kognic's managed annotation services for full-service delivery, or adopt a hybrid model in which your preferred BPO partner works on the platform. This flexibility lets you control the tradeoff between cost, speed, and oversight based on your program's needs.

For input, the platform accepts common image formats (PNG, JPG, JPEG, WEBP, AVIF) and 3D point cloud formats (PCD, LAS, CSV). For output, Kognic supports OpenLABEL — the emerging industry standard for annotation data — as well as custom export converters tailored to your specific training pipeline requirements. This ensures labeled data integrates smoothly into your ML infrastructure without manual conversion steps.
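As an illustration of what a custom export step might look like, the sketch below converts per-frame 2D boxes stored as corner coordinates into an OpenLABEL-style JSON structure. The input format and the `to_openlabel` function are hypothetical, and the output covers only a small subset of the ASAM OpenLABEL schema (no streams, coordinate systems, or frame intervals). It follows our reading that an OpenLABEL `bbox` value is `[x_center, y_center, width, height]`, so validate the output against the official JSON schema before relying on it.

```python
from __future__ import annotations

import json


def to_openlabel(frames: dict[int, list[dict]]) -> dict:
    """Convert hypothetical per-frame 2D boxes to an OpenLABEL-style dict.

    `frames` maps frame number -> list of dicts with keys
    "uid", "type", "x_min", "y_min", "x_max", "y_max" (an assumed input format).
    """
    objects: dict[str, dict] = {}
    out_frames: dict[str, dict] = {}
    for frame_no, boxes in frames.items():
        frame_objects = {}
        for box in boxes:
            uid = str(box["uid"])
            # Static object info (name/type) is declared once at the top level.
            objects.setdefault(uid, {"name": f"{box['type']}_{uid}", "type": box["type"]})
            w = box["x_max"] - box["x_min"]
            h = box["y_max"] - box["y_min"]
            # Assumed OpenLABEL convention: [x_center, y_center, width, height].
            val = [box["x_min"] + w / 2, box["y_min"] + h / 2, w, h]
            frame_objects[uid] = {"object_data": {"bbox": [{"name": "shape", "val": val}]}}
        out_frames[str(frame_no)] = {"objects": frame_objects}
    return {
        "openlabel": {
            "metadata": {"schema_version": "1.0.0"},
            "objects": objects,
            "frames": out_frames,
        }
    }


if __name__ == "__main__":
    data = to_openlabel(
        {0: [{"uid": 0, "type": "car", "x_min": 100, "y_min": 50, "x_max": 180, "y_max": 110}]}
    )
    print(json.dumps(data, indent=2))
```

Keeping object identity at the top level and per-frame geometry under `frames` is what lets a track span many frames without repeating its static attributes.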

Kognic's automation features can save up to 68% of annotation time compared to manual labeling. The platform integrates pre-labels from your own models or third-party sources, giving annotators a starting point to review and refine rather than creating labels from scratch. Additional automation includes multi-sensor projections, ego motion compensation, SAM-based segmentation, object tracking, automatic box adjustment, and static object filtering. These tools work together to let human annotators focus their effort where it matters most.
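Multi-sensor projection typically means drawing LiDAR points or 3D labels onto the camera images. The sketch below shows the underlying pinhole-camera math, assuming a known 4x4 LiDAR-to-camera extrinsic matrix, a 3x3 intrinsic matrix `K`, and no lens distortion. The function name `project_lidar_to_image` is hypothetical, and this is not Kognic's implementation.

```python
import numpy as np


def project_lidar_to_image(points_lidar, T_cam_from_lidar, K, image_size):
    """Project Nx3 LiDAR points into pixel coordinates with a pinhole model.

    Returns (uv, mask): uv holds pixel coordinates for the points that are in
    front of the camera and inside the image; mask marks those input rows.
    Lens distortion is ignored (an assumption).
    """
    pts = np.asarray(points_lidar, dtype=float)
    # Homogeneous coordinates so the 4x4 extrinsic applies rotation + translation.
    pts_h = np.hstack([pts, np.ones((pts.shape[0], 1))])
    pts_cam = (T_cam_from_lidar @ pts_h.T).T[:, :3]
    in_front = pts_cam[:, 2] > 1e-6  # the camera looks along its +z axis
    uvw = (K @ pts_cam.T).T
    # Replace non-positive depths before dividing; those points are masked out anyway.
    depth = np.where(in_front, uvw[:, 2], 1.0)
    uv = uvw[:, :2] / depth[:, None]
    width, height = image_size
    inside = (uv[:, 0] >= 0) & (uv[:, 0] < width) & (uv[:, 1] >= 0) & (uv[:, 1] < height)
    mask = in_front & inside
    return uv[mask], mask
```

In a real rig the extrinsic also has to account for the different axis conventions of LiDAR (often x forward) and camera (z forward), and fast motion adds the need for ego motion compensation between the LiDAR sweep time and the camera exposure time.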

Active learning is a strategy that focuses your annotation budget on the data samples that will improve your model the most. Instead of labeling data randomly, the platform helps you identify high-value samples — scenes where your model is uncertain, encounters rare objects, or makes errors. By prioritizing these samples for annotation, you get better model performance with less labeled data. This targeted approach reduces overall annotation costs while accelerating model improvement.
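One common way to find samples "where your model is uncertain" is entropy-based uncertainty sampling: score each unlabeled sample by the entropy of the model's predicted class distribution and send the highest-scoring ones to annotation. The sketch below assumes you already have an (N, C) array of per-sample class probabilities. `select_for_annotation` is a hypothetical helper, and it does not cover the other signals mentioned above, such as rare objects or known model errors.

```python
import numpy as np


def select_for_annotation(probs, budget):
    """Return indices of the `budget` samples with the highest predictive entropy.

    `probs` is an (N, C) array of per-sample class probabilities from the
    current model (an assumed input; any classifier's softmax output works).
    """
    # Clip to avoid log(0) for classes predicted with probability zero.
    p = np.clip(np.asarray(probs, dtype=float), 1e-12, 1.0)
    entropy = -(p * np.log(p)).sum(axis=1)  # higher = model less certain
    # argsort sorts ascending, so negate to put the most uncertain first.
    order = np.argsort(-entropy, kind="stable")
    return order[:budget].tolist()


if __name__ == "__main__":
    probs = [
        [0.98, 0.01, 0.01],  # confident
        [0.40, 0.35, 0.25],  # uncertain
        [0.70, 0.20, 0.10],  # fairly confident
        [0.34, 0.33, 0.33],  # almost uniform, most uncertain
    ]
    print(select_for_annotation(probs, budget=2))  # -> [3, 1]
```

Pure uncertainty ranking tends to pick many near-duplicate frames from the same difficult scene, so production pipelines often combine it with a diversity criterion before spending the annotation budget.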

Onboarding typically starts with a demo and technical evaluation. You can request a demo to see the platform in action with your specific sensor configuration and annotation requirements. From there, Kognic's team works with you to define annotation guidelines, set up your project, and run a pilot on a representative sample of your data. Most teams go from first conversation to active annotation within a few weeks.