Pre-Production Validation Challenges in Autonomy Systems

Pre-production autonomy systems face a challenge: ensuring that validation datasets accurately represent the real-world scenarios the system will encounter. Unlike traditional automotive systems, autonomous vehicles must handle an "open set" problem—they need to work everywhere, not just in controlled environments. For consumer autonomous driving systems like those in the Volvo EX90, the error tolerance is near zero.

The complexity of validation increases exponentially with system requirements. Autonomous systems combine LiDAR, radar, and camera data, and any misalignment due to calibration errors, sensor vibration, or timestamp issues can result in flawed annotations. These errors cascade through the development pipeline, potentially training models on incorrect "ground truth" and compromising safety.

Pre-production validation is the process of verifying that an autonomous driving system's training data and perception models meet safety, accuracy, and regulatory standards before the system is deployed to production vehicles. It requires human-verified ground truth data with full traceability, covering sensor calibration, edge case coverage, and compliance with standards like UNECE WP.29 and ISO 26262.

Key Takeaways

- Modern autonomous fleets generate up to 4TB of raw sensor data per vehicle per day, but most recordings remain unlabeled. The scarce resource is clean, verifiable ground truth covering rare edge cases and cross-sensor context.

- UNECE WP.29 regulations require an auditable chain of human-verified ground truth for autonomous vehicle approval. Without traceable datasets, ML models cannot be legally certified for deployment.

- Real-time checker apps that catch physically impossible annotation errors during labeling (not after) are critical for safety-grade data quality, aligning with ISO 26262 safety standards.

- Many perceived model errors are actually definition errors. Aligning annotation guidelines with safety requirements before labeling begins prevents systematic data quality problems downstream.

- The annotation market for autonomous driving is projected to exceed $7 billion by 2030, driven by regulatory requirements and the shift toward foundation models that demand even higher-quality validation data.

How Much Data Do Autonomous Vehicles Generate, and Why Does That Matter?

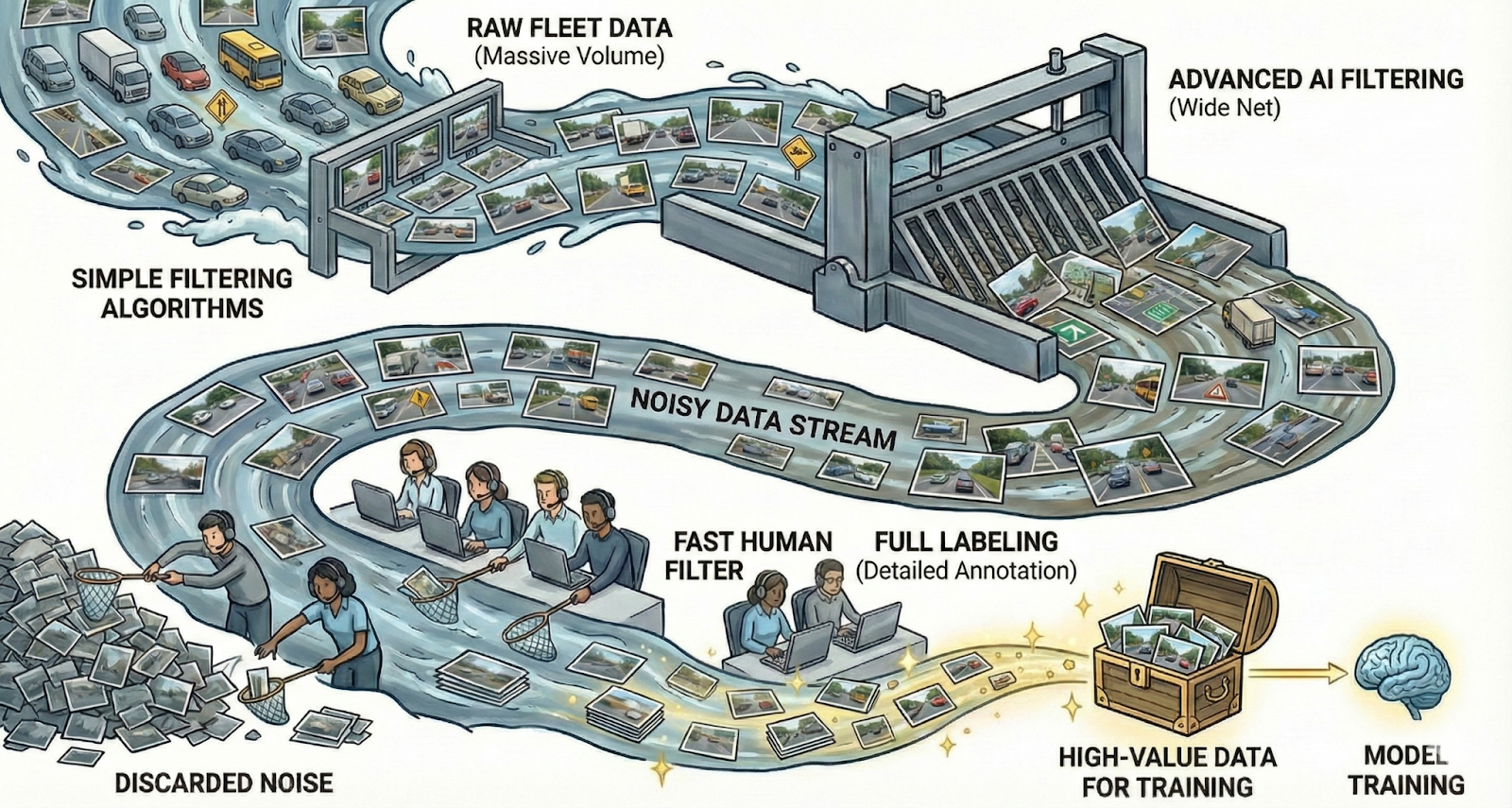

Modern autonomous fleets generate terabytes of sensor data per vehicle per day. By 2030, over 70 million vehicles per year will have operational self-driving stacks (L2 and beyond), with L2+ cars producing up to 4TB of raw data per vehicle per day. The annotation market is expected to exceed $7B by 2030, potentially reaching $21B in optimistic scenarios.

However, volume alone doesn't solve the problem. Most raw recordings are unlabeled, redundant, and lack the context needed to understand cause and intent. What's truly scarce are clean, verifiable signals—rare edge cases, cross-sensor context, and annotations that explain not just what happened, but why.

What Regulations Govern Autonomous Vehicle Validation?

UNECE WP.29: Regulatory Approval Framework

UNECE WP.29 regulations require an auditable chain of human-verified ground truth for autonomous vehicle approval. This means that every release must be validated against traceable, human-verified data, with documented evidence showing:

- Who confirmed specific driving scenarios (e.g., a rare cut-in maneuver)

- Why a disengagement was labeled as a "system bug" versus driver error

- What objective criteria were met before the model was promoted to the fleet

Without this curated, signed-off dataset, the latent features inside machine learning models remain legally uninterpretable and inadmissible for regulatory certification. Human review becomes the bridge between opaque neural networks and the explicit artifacts—scenario coverage reports, compliance checklists, safety case arguments—that certification bodies require.

EU AI Act and Emerging Standards

The EU AI Act further reinforces the requirement for human oversight in safety-critical AI systems. As regulation increases, demand for human-verified ground truth continues to grow, making the quality and traceability of annotation workflows increasingly important for market access.

How Does Kognic Ensure Compliance-Grade Ground Truth?

Safety-Critical "Checker" Architecture

Kognic's platform implements real-time "checker apps" that prevent physically impossible errors from entering the dataset at the source. This "prevention over correction" philosophy aligns directly with ISO 26262 standards for safety monitoring:

- Overlapping Vehicle Detection: The system flags errors if two passenger car cuboids overlap in 3D space—a physical impossibility that would confuse motion planning algorithms

- Biometric Validation: Warnings trigger if pedestrian annotations exceed realistic dimensions (e.g., taller than 2.5 meters), preventing "ghost" objects or noise from being labeled as humans

- Relationship Verification: Complex logic ensures that if a pedestrian is labeled as "riding a bike," the bicycle object is linked to the pedestrian object throughout the temporal sequence

- Plausibility checks: flagging unrealistic accelerations or speeds that prompt annotators to take a second look.

These checks are not QA scripts run after the fact—they are guardrails built into the annotation tool, preventing errors from entering the dataset during the labeling process.

Multi-Layer Quality Assurance Process

Kognic's QA workflow combines automated validation, expert human review, and customer-defined quality criteria to ensure annotation quality meets safety requirements:

- Automated Validation: Real-time detection of labeling issues, calibration errors, and physically impossible configurations

- Multi-Annotator Consensus: Critical scenarios are annotated multiple times to ensure accuracy and build consensus on ambiguous cases

- Granular Feedback Systems: Issues can be flagged and tracked at the object, frame, sequence, and sub-sequence level

- Live QA Dashboards: Real-time tracking of accuracy metrics, object counts, error types, and reviewer performance

- Customizable QA Rules: Workflows can adapt to evolving quality criteria without engineering delays

Expert Workforce and Domain Knowledge

Unlike generic annotation providers, Kognic employs "Data specialists"—often with PhDs or advanced engineering backgrounds—who understand traffic rules, physics, and causal reasoning. This specialized workforce is essential for making the complex behavioral judgments required for safety-critical validation.

The platform also includes dedicated "Lead Quality Managers" (LQMs) who work directly with customer engineering teams to ensure that annotation guidelines align precisely with safety requirements. This prevents the misalignment problem where models learn from lazy or inconsistent annotations rather than true safety-critical intent.

Traceability and Audit Trail

Every annotation in Kognic's platform maintains a complete chain of custody, documenting:

- Which annotator(s) worked on each frame or sequence

- What instruction version was used

- Reviewer consensus scores and disagreement resolution

- Automated sanity check results

- Customer approval status

This comprehensive audit trail provides the documented evidence required by ISO 26262 and UNECE WP.29 for regulatory certification and accident investigation.

Automotive Standards Compliance

Kognic's platform is built with automotive industry standards at its core:

- ASAM OpenLABEL: Native compliance with the automotive industry standard for ground truth labeling

- ISO Certification: Full ISO compliance for quality management systems

- TISAX: Enterprise-grade security and compliance certifications required by Tier 1 suppliers and OEMs

- ISO27001 and SOC2-type2 certifications expected in 2026 to further enhance our cybersecurity.

- GDPR Compliance: Full adherence to European data protection requirements, including workforce location and EU infrastructure

Proven at Scale

Kognic has delivered over 100 million annotations across 7+ years of production, supporting 70+ autonomy programs with deployments across the US, Europe, China, and Japan. This battle-tested infrastructure demonstrates the ability to maintain compliance-grade quality at the massive scale required by modern autonomous vehicle development programs.

How Do Automotive Companies Solve Validation at Scale?

Zenseact (Volvo): Zero-Collision Consumer Autonomy

Zenseact, the software subsidiary of Volvo Cars, is developing unsupervised autonomous driving for consumer vehicles (specifically the Volvo EX90). The challenge: consumer autonomous driving is an "open set" problem—unlike a robotaxi in a geofenced city, a Volvo must work everywhere, with near-zero error tolerance.

Zenseact uses Kognic not just for labeling, but for validation. The collaboration revealed that many perceived "model errors" were actually "definition errors" (e.g., what exactly counts as the road edge in heavy snow?). By aligning annotation guidelines with safety requirements first, Kognic ensured data consistency, allowing Zenseact to use the data to "program" the safety constraints of the vehicle.

The "Zenseact Open Dataset" (ZOD) stands as a testament to this approach—a massive, multimodal dataset curated to expose the "long tail" of European driving scenarios required for safety validation.

Kodiak: Long-Range Perception for Autonomous Trucking

Kodiak Robotics faces a unique validation challenge: detecting vehicles at 400+ meters on highways, where a car appears as just a few pixels in camera imagery and 1-2 points in LiDAR. Standard annotation workflows routinely miss these sparse signals or label them as noise.

Kognic's high-fidelity sensor fusion and temporal aggregation capabilities allow annotators to track objects over time, confirming that distant, sparse detections are real vehicles. This capability is safety-critical: detecting a stalled car on the highway horizon is the difference between a safe lane change and a catastrophic collision at highway speeds.

What Does the Future of Autonomous Vehicle Validation Look Like?

As autonomy programs transition toward foundation models and end-to-end architectures, the role of validation data becomes even more critical. These systems demand:

- Fleet-Scale Data Curation: Petabyte-scale clip triage to surface rare hazards and edge cases

- Causal Coverage Verification: Human judgment to verify that training data covers the causal scenarios required for safe operation

- Policy-Level Feedback: Preference rewards that encode comfort, cultural norms, and legal requirements

- Regulatory Traceability: Continuous documentation that bridges opaque neural networks with explicit safety artifacts

FAQ Section

Q: What is pre-production validation in autonomous driving?

Pre-production validation is the process of confirming that an autonomous vehicle's perception models and training datasets meet safety, quality, and regulatory requirements before deployment to real vehicles. It involves verifying ground truth data accuracy, sensor calibration, edge case coverage, and traceability of human review decisions.

Q: Why is human-verified ground truth required for autonomous vehicles?

Regulators like UNECE WP.29 mandate an auditable chain of human-verified ground truth for autonomous vehicle approval. Neural networks are opaque by nature, so human review creates the explicit artifacts (scenario coverage reports, compliance checklists, safety documentation) that certification bodies require. Automated checks alone cannot satisfy current regulatory frameworks.

Q: How much data do autonomous vehicle fleets generate?

Modern autonomous fleets generate terabytes of sensor data per vehicle per day. L2+ vehicles can produce up to 4TB of raw data daily. By 2030, over 70 million vehicles annually are expected to have operational self-driving systems at L2 or above, making scalable annotation and validation infrastructure essential.

Q: What is the difference between annotation errors and definition errors?

Annotation errors occur when a labeler incorrectly identifies or marks an object. Definition errors occur when the annotation guidelines themselves are ambiguous or misaligned with the system's safety requirements. For example, how to define "road edge" during heavy snowfall is a definition problem, not an annotator mistake. Fixing definition errors upstream prevents systematic data quality issues.

Q: What standards apply to autonomous vehicle data annotation?

Key standards include UNECE WP.29 (regulatory approval framework requiring traceable human-verified data), ISO 26262 (functional safety for automotive systems), ASAM OpenLABEL (industry-standard annotation format), and the EU AI Act (requiring human oversight for safety-critical AI). Data security certifications like TISAX and ISO 27001 are also relevant for handling OEM sensor data.

Q: What are checker apps in annotation quality assurance?

Checker apps are real-time validation rules that run during the annotation process, catching physically impossible errors at the source rather than in post-processing. Examples include flagging overlapping vehicle bounding boxes in 3D space, warning when pedestrian dimensions exceed realistic limits, and detecting unrealistic accelerations. This prevents flawed data from entering training pipelines.

Kognic's platform is purpose-built to meet these evolving requirements, providing the scalable human-feedback infrastructure that enables autonomous systems to align with both human intent and regulatory requirements—at the speed and scale demanded by production autonomy programs.

Share this

Written by