Launching Language Grounding: Teaching Machines Why Things Happen

Language Grounding is Kognic's annotation capability for vision-language models (VLMs) and vision-language-action models (VLAs) in autonomous driving. It turns multi-sensor driving scenes into structured textual descriptions of what is happening, why, and what the agent should do next. Language Grounding supports three annotation modes (Write, Edit, Rank) and uses a Chain of Causation methodology that separates decision-point reasoning from full-context QA to prevent hindsight bias. Customers include Qualcomm and BMW autonomy programs.

For a decade, the autonomous driving industry focused on one question: what is in the scene?

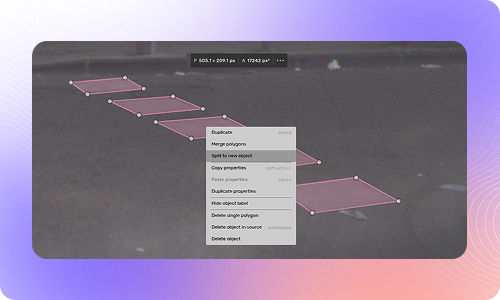

Bounding boxes. Segmentation masks. Lane lines. Point clouds. We built an entire annotation ecosystem around helping machines see. And it worked. Perception models got remarkably good at identifying objects in the world.

But seeing is not understanding.

A child on a bicycle at the edge of the road. A delivery truck double-parked, forcing oncoming traffic into your lane. A pedestrian stepping off the curb while looking at their phone. In each of these cases, the critical question is not what is there. It is why the vehicle should brake, steer, or wait.

This is the shift the industry is making right now. End-to-end driving models — architectures that map sensor inputs directly to vehicle control — need more than labeled objects. They need causal understanding. Reasoning that links what the vehicle observes to what it should do, and why.

We have been building toward this for years. Today, we are ready.

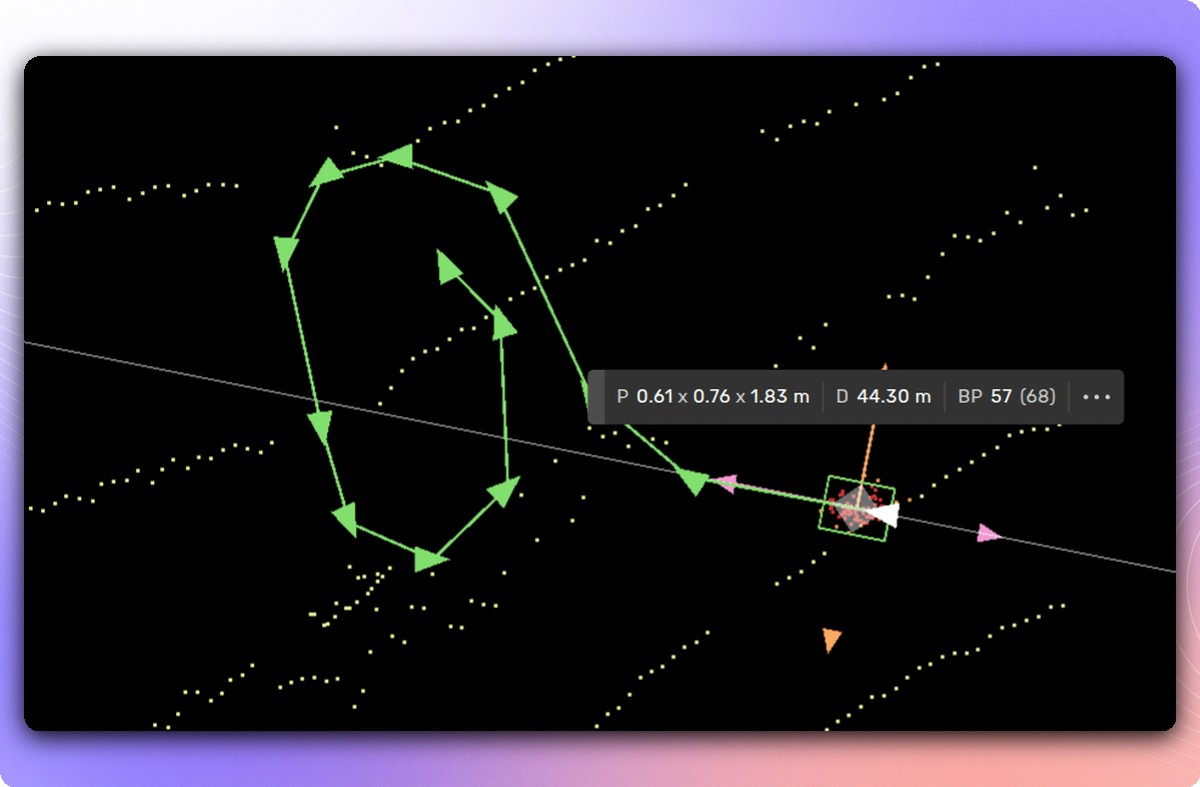

We are adding Language Grounding to the Kognic platform — the annotation workflows required for next-generation driving models. This is not a separate product. It is an expansion of what our platform does, built on the same foundation our customers already rely on for production annotation.

Language Grounding is the practice of annotating driving scenes with natural language descriptions that explain why a vehicle should act, not just what is present. It connects observations to causal reasoning, giving autonomous driving models the structured text data they need to learn decision-making rather than pattern matching. Kognic's Language Grounding capability supports three annotation modes: writing original reasoning traces, editing model-generated text proposals, and ranking multiple outputs for preference learning.

Key Takeaways (paste after definition box)

- Language Grounding teaches autonomous driving models why to act, not just what is in the scene, by annotating driving data with structured causal reasoning in natural language.

- Kognic's platform supports three annotation modes for reasoning data: Write (original descriptions), Edit (refining AI-generated text), and Rank (comparative quality assessment for preference learning).

- The Chain of Causation methodology prevents hindsight bias by limiting annotators to information available at the decision point before revealing the full sequence.

- Structured reasoning data has demonstrated a 12% improvement in planning accuracy and a 35% reduction in close encounters in published research.

- This capability supports the industry shift from modular perception systems to end-to-end driving models that need to learn causal reasoning, not just object detection.

How Does Language Grounding Annotation Work?

Language Grounding adds three annotation modes to the Kognic platform:

Write — Annotators create textual scene descriptions and reasoning traces from scratch. Not vague summaries, but structured explanations of what is happening and why it matters for the driving decision.

Edit -- Model-generated text proposals are refined by human domain experts. Language models are fast but imprecise. Human editors catch the mistakes that matter for safety.

Rank -- Multiple model outputs are compared and ranked by quality. This is preference learning for physical AI -- the equivalent of RLHF, but for driving behavior instead of chatbot responses.

Alongside these modes, we are introducing the Chain of Causation workflow — our methodology for producing causally grounded reasoning data.

What Is Chain of Causation and Why Does It Matter?

The Chain of Causation workflow is designed to solve a specific problem: hindsight bias in reasoning annotation.

Here is the issue. If you show an annotator the full video — including what happens after a driving decision — they will unconsciously incorporate future information into their explanation. The reasoning looks correct but is causally broken. A model trained on that data learns correlations, not causes.

Our workflow prevents this by design. In step one, the annotator sees the scene only at the decision point. No future context. They identify what matters and what the vehicle should do based solely on available information. In step two, the full sequence is unlocked for quality assurance. The reasoning trace is verified against what actually happened.

This two-step approach produces annotation data that is causally grounded — each observation leads logically to the decision, and no future information leaks into the reasoning chain.

This two-step approach produces annotation data that is causally grounded -- each observation leads logically to the decision, and no future information leaks into the reasoning chain.

What Results Does Structured Reasoning Data Produce?

Published research on structured causal reasoning for autonomous driving demonstrates a 12% improvement in planning accuracy on challenging scenarios and a 35% reduction in close encounter rates in simulation. Reinforcement learning on reasoning quality improved consistency by 37%.

These numbers validate something we have believed for a long time: the quality of reasoning annotation directly determines the quality of driving decisions. Vague descriptions like "be cautious" and superficial observations like "it is sunny" do not help models learn to drive. Structured causal chains do.

Why Is the Industry Moving from Perception to Reasoning?

The industry is moving from correlation to causation. From labeling what is present to explaining why it matters. This is harder work — and more valuable work. It requires domain expertise, rigorous methodology, and tools built for the task.

We have spent seven years building exactly that. Language Grounding is the next step.

If your team is exploring end-to-end architectures, vision-language models, or reasoning-capable driving systems, get in touch. We would like to understand your program and show you what this looks like in practice.

FAQ Section

Q: What is Language Grounding in autonomous driving?

Language Grounding is the process of annotating driving scenes with natural language to explain causal reasoning behind driving decisions. Instead of labeling what objects are in a scene, annotators describe why a vehicle should brake, yield, or change lanes. This gives end-to-end driving models structured reasoning data they can learn from.

Q: How is Language Grounding different from traditional annotation?

Traditional perception annotation labels objects with bounding boxes, segmentation masks, and trajectory data. Language Grounding adds a layer of causal explanation on top of perception. Annotators write, edit, or rank text descriptions that connect what a driver observes to the decision they should make and why.

Q: What are the three Language Grounding annotation modes?

Kognic supports three modes. Write mode: annotators create original reasoning descriptions from scratch. Edit mode: human experts refine text generated by AI models, catching safety-critical errors automated systems miss. Rank mode: annotators compare multiple model outputs to enable preference-based learning similar to RLHF.

Q: What is Chain of Causation in driving annotation?

Chain of Causation is a methodology that prevents hindsight bias in reasoning annotation. Annotators first see only the information available up to the decision point, write their causal reasoning, and only then see the full sequence to verify. This stops future knowledge from contaminating the causal chain.

Q: Does Language Grounding improve autonomous driving performance?

Research using structured causal reasoning methodology shows a 12% improvement in planning accuracy on challenging scenarios, a 35% reduction in close encounter rates, and 37% better consistency through reinforcement learning on reasoning quality.

Q: Who performs Language Grounding annotation?

Language Grounding requires domain experts with deep knowledge of traffic dynamics, vehicle physics, and driving judgment. Unlike basic perception labeling, this work cannot be effectively crowdsourced because annotators must understand why driving decisions happen, not just identify objects in a scene.

Share this

Written by

.jpg?width=2000&height=500&name=kognic-language-grounding-banner%20(4).jpg)