Deploying interactive machine learning

Kognic makes use of machine learning to annotate data faster with higher quality. It is always a challenge to deploy machine learning tools in a real production environment, especially when it is used interactively and on-demand by the platform’s users. Welcome to read about how we deploy fast and interactive machine learning services that can scale up to thousands of users!

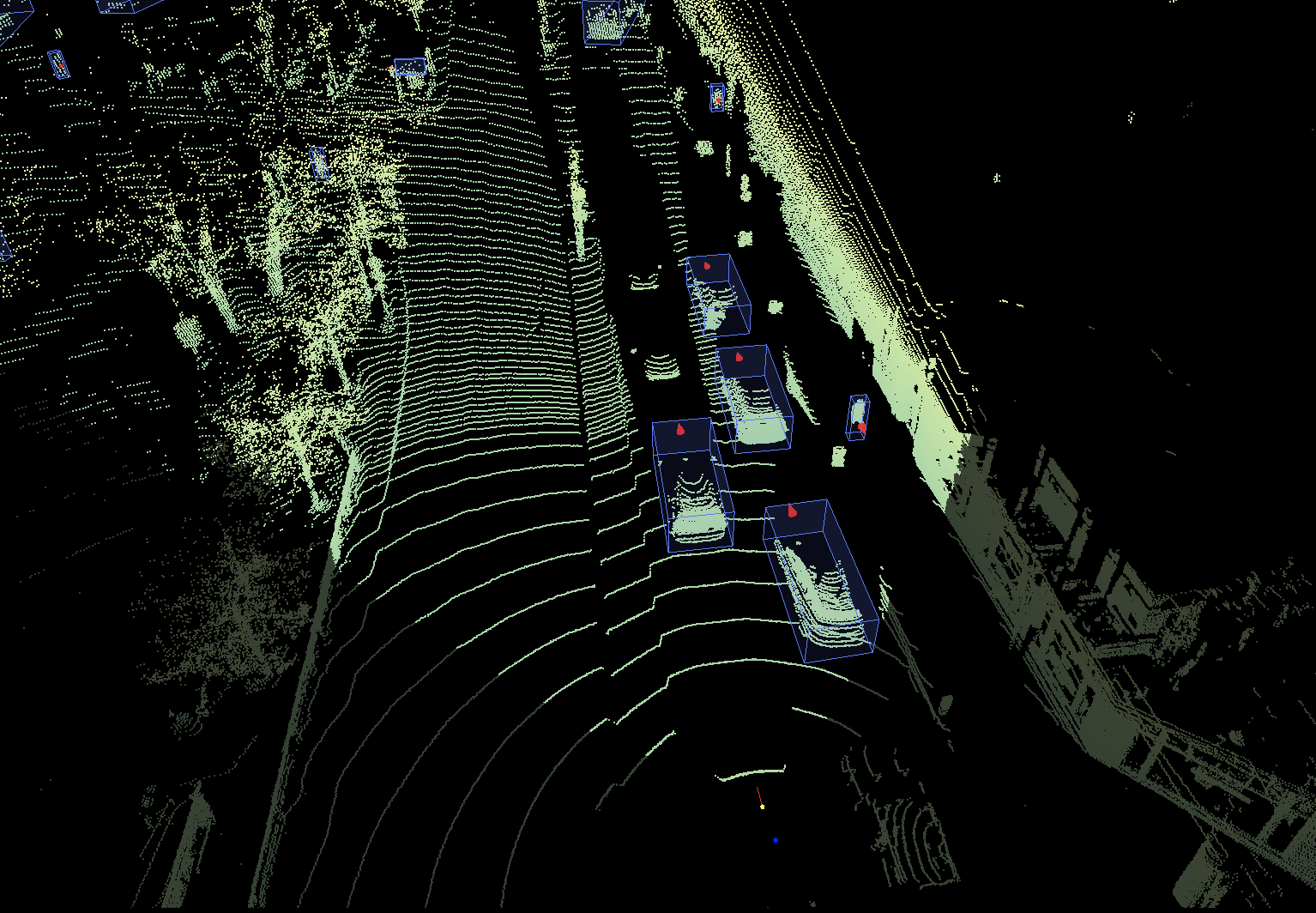

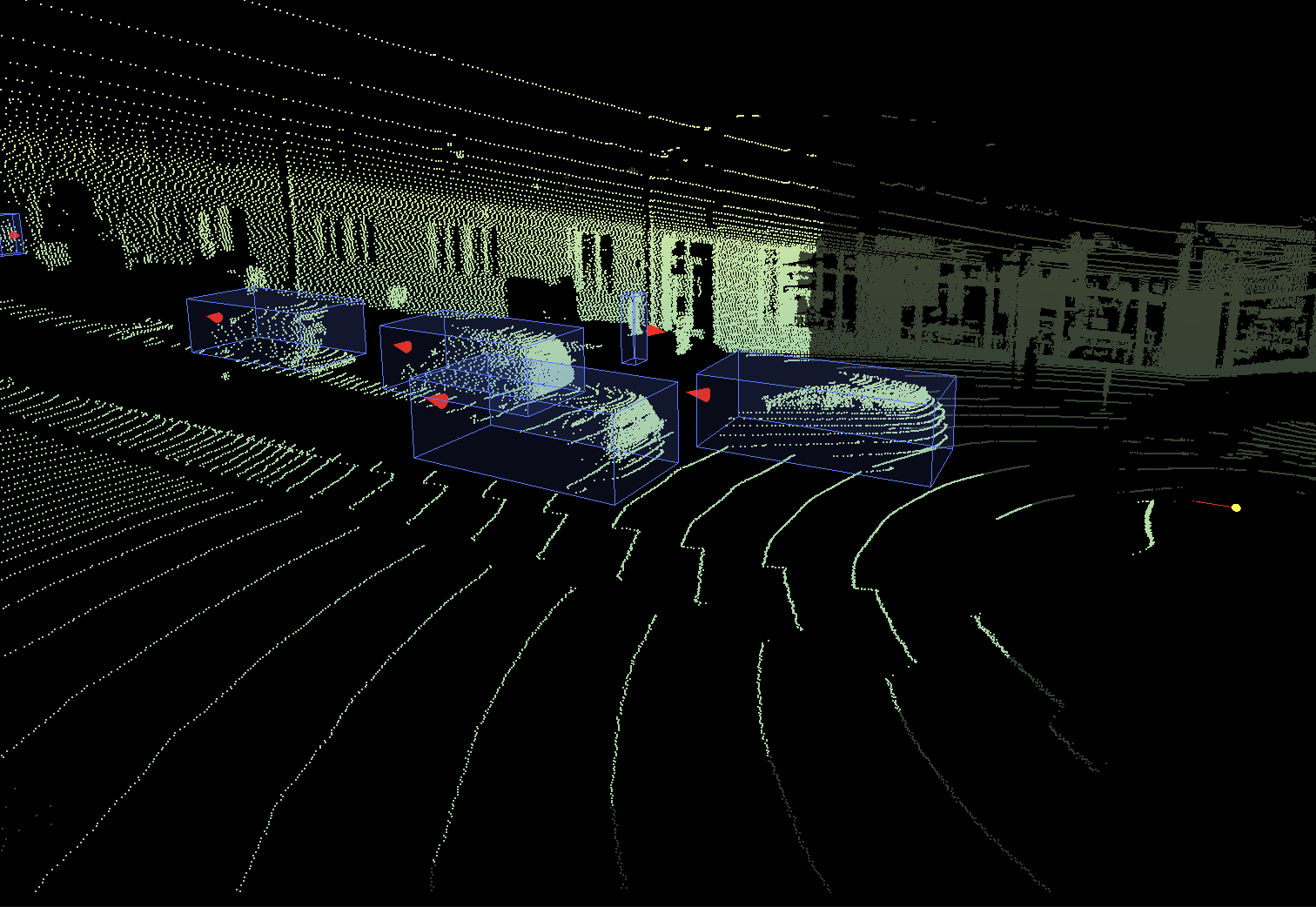

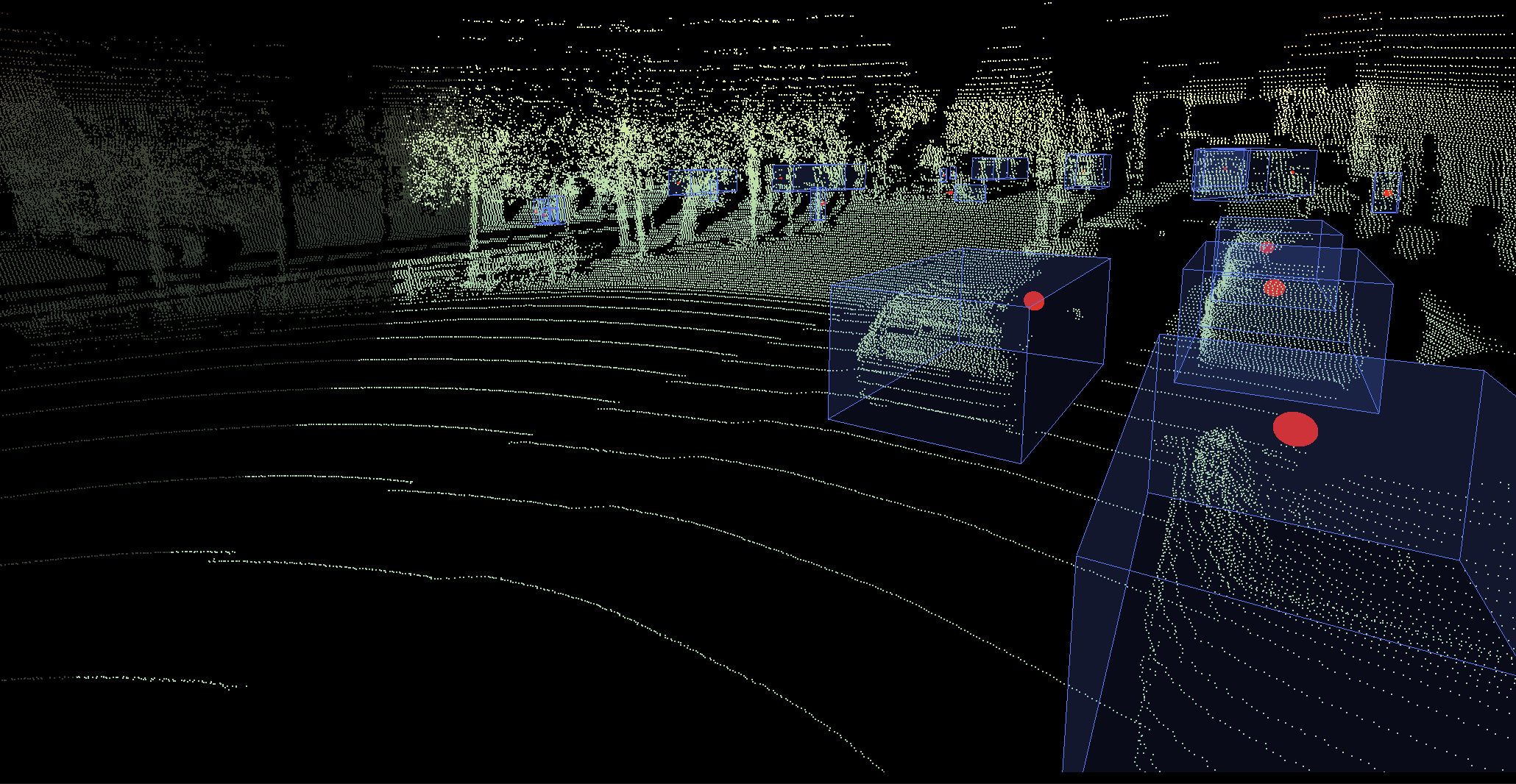

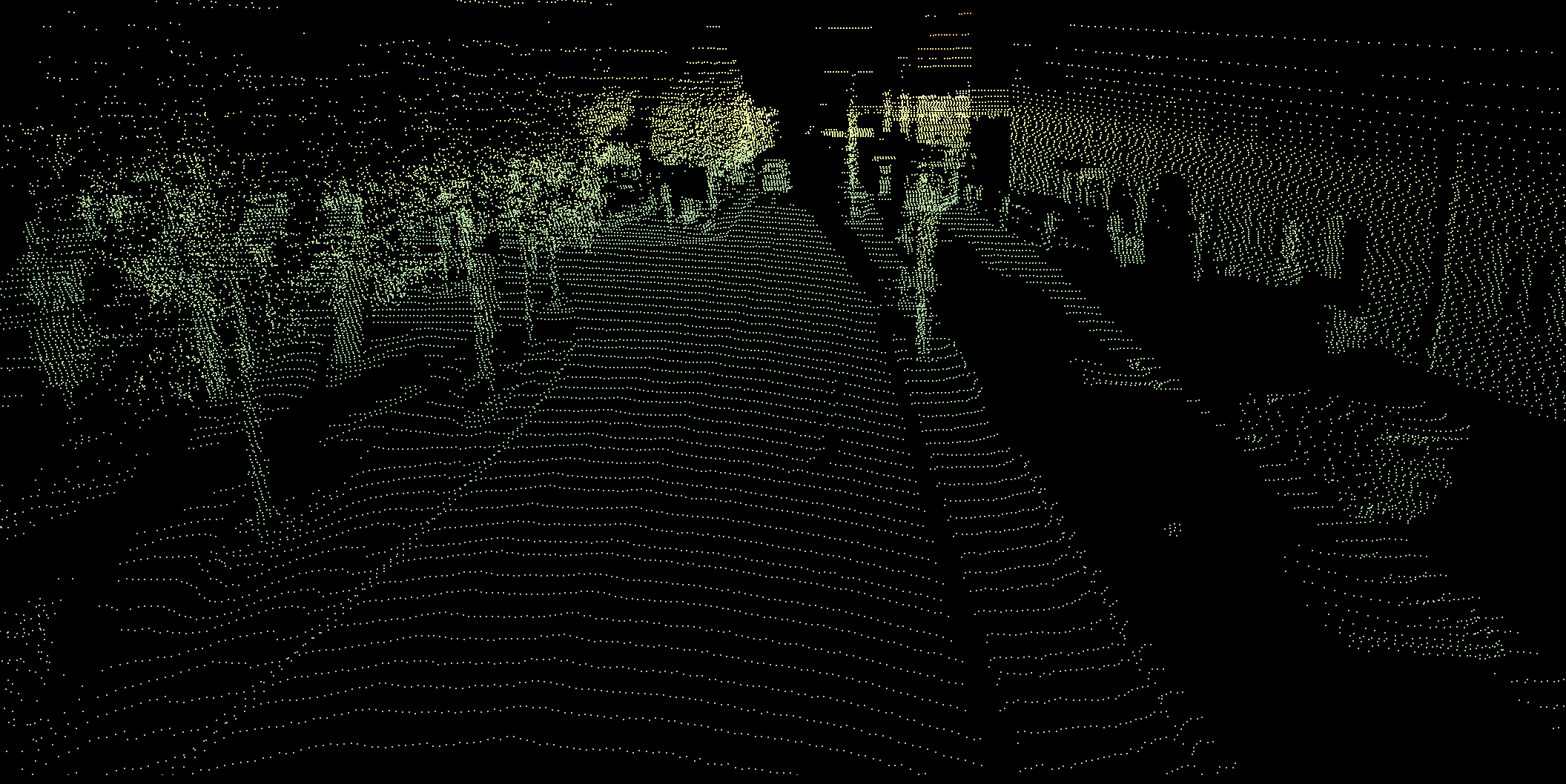

Kognic offers an analytics and annotation platform, where we, among other things, provide high-quality annotations of data for perceptions systems in autonomous vehicles. Annotating data for self-driving cars often consists of marking objects in 2D images and 3D point clouds generated by LiDAR sensors. A LiDAR (which stands for Light Detection and Ranging) is a remote sensing method that uses light in the form of a pulsed laser to measure ranges, so each point in the point cloud is one detection of a surface in the real world.

To manually annotate objects in a point cloud, human annotators draw bounding boxes in 3D and adjust all dimensions to fit the points belonging to an object, often a vehicle or pedestrian. When the annotators are working on a video, they do this for every single frame in the video sequence. Each specific object is also assigned a unique id that has to be persistent throughout the whole sequence. As you can imagine, the process of manual annotating is very time-consuming.

To speed up the annotation process, we use machine learning in the workflow. More specifically for this use case, our goal is to provide the annotator with a set of software applications that use modern machine learning to suggest bounding boxes in the point cloud. This would empower the human annotator, moving focus from manually creating boxes to instead reviewing and correcting the automatically generated ones. The main goal is to make the annotation process involve fewer steps, which will make it faster.

"...our goal is to provide the annotator with a set of software applications that use modern machine learning to suggest bounding boxes in the point cloud."

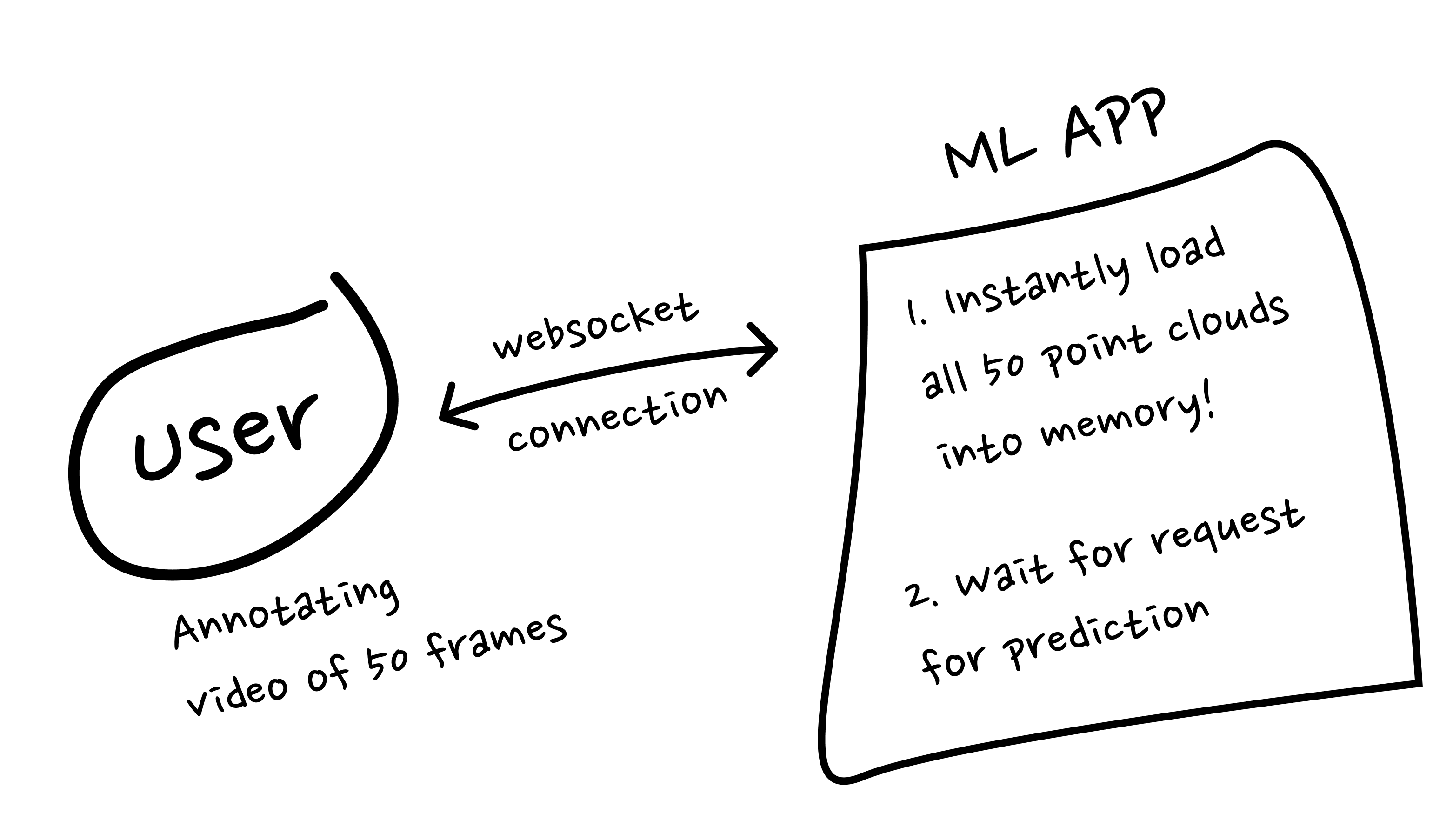

So how did we actually design our new application? We went for an interactive solution, where the annotator indicates a region containing an object; for example, a vehicle and a neural network will then predict a bounding box. According to the well-established Doherty Threshold, the response time should be no more than 400ms for a real-time feature to feel responsive. We chose to use websocket connections between each client and the machine learning server to handle this constraint. This enabled us to keep a state of each connection and download all necessary data to have it readily at hand. A request for a bounding box prediction would then only take as long as the inference time of running the deep neural network model, without having to download any data on demand.

This design made the application quick and responsive and it worked well for a few users at a time. We asked ourselves “That’s cool, but is it scalable?” - and so our journey began… We needed to scale our solution to hundreds, or even thousands, of annotators. We carried out a load test and discovered that the application was not reliable for a larger number of users.

When a client connects to our application via a websocket connection, the application will instantly start downloading all point clouds that the client is working on. This causes high load, and if many users connect at once, it results in timeout errors. We had no way of gracefully disconnecting client connections and route them to an instance with less load. The task to assign users to new instances of the application was also difficult since the load was unevenly distributed over time, peaking when a new user connected which started the download of new point cloud files.

"That’s cool, but is it scalable?” - and so our journey began…"

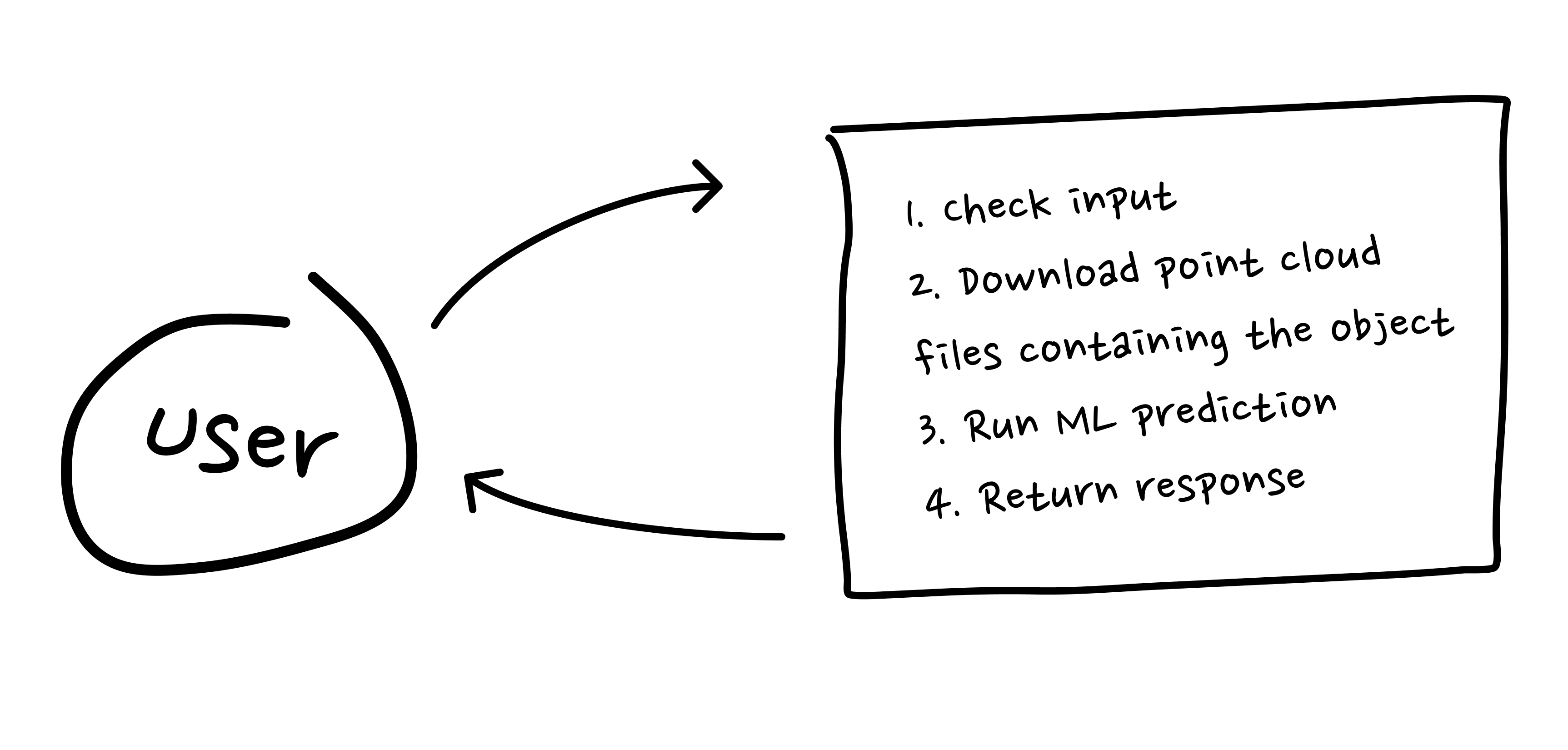

Instead of trying to solve the complex problem of having persistent connections and at the same time also having a hard constraint on how large instances we could use, we decided to investigate other options. What if we switched to a stateless approach? The idea would be to create a service that doesn't need to keep track of which point clouds you will be working on in the future, or what you have done in the past. Ideally, it would be enough to ask the service for a prediction together with the file path to the point cloud of interest. The service can download the necessary data, perform the prediction, and then happily move on to serve the next request without considering any past or future. That would solve the problem with the persistent connections and routing clients between containers, but we had to download files on demand for every request, which might be too slow.

To optimize the time needed for downloading point clouds we decided to utilize their underlying file structure. The point cloud representation is a file structure where the space (spanned by the points) is divided into octants. The main reason for storing point clouds in this hierarchical structure is for a user interface to stepwise render the point cloud with different resolutions. Every octant is a node that contains eight more child nodes and so on, adding layers of points to increase the resolution in specific regions. We were already parsing and transforming points from each file to a global coordinate system to build a complete point cloud, so we had the knowledge of how to find all files containing the region of interest. This enabled us to download only a handful of all the files in a point cloud to retrieve points belonging to a vehicle. Suddenly we were down at 300-400 ms for downloading files, approaching our total response time deadline of 400ms fast.

"We always deploy our machine learning algorithms on CPU instances to be able to serve them at a reasonable cost."

To further decrease our response time, we deployed the application with aiohttp, an asynchronous web framework. This gave us the benefit of allowing the application to not sit idle and wait while downloading files or performing predictions. We always deploy our machine learning algorithms on CPU instances to be able to serve them at a reasonable cost.

Now, we could finally start working on actually making the application scalable and useful in production. There is a lot of infrastructure needed when developing and deploying new applications. We started by internally discussing different errors that could occur in the application and how to handle them. In order to keep track of the performance, we configured a collection of metrics, most importantly inference times and download times, which are being monitored and alerted on.

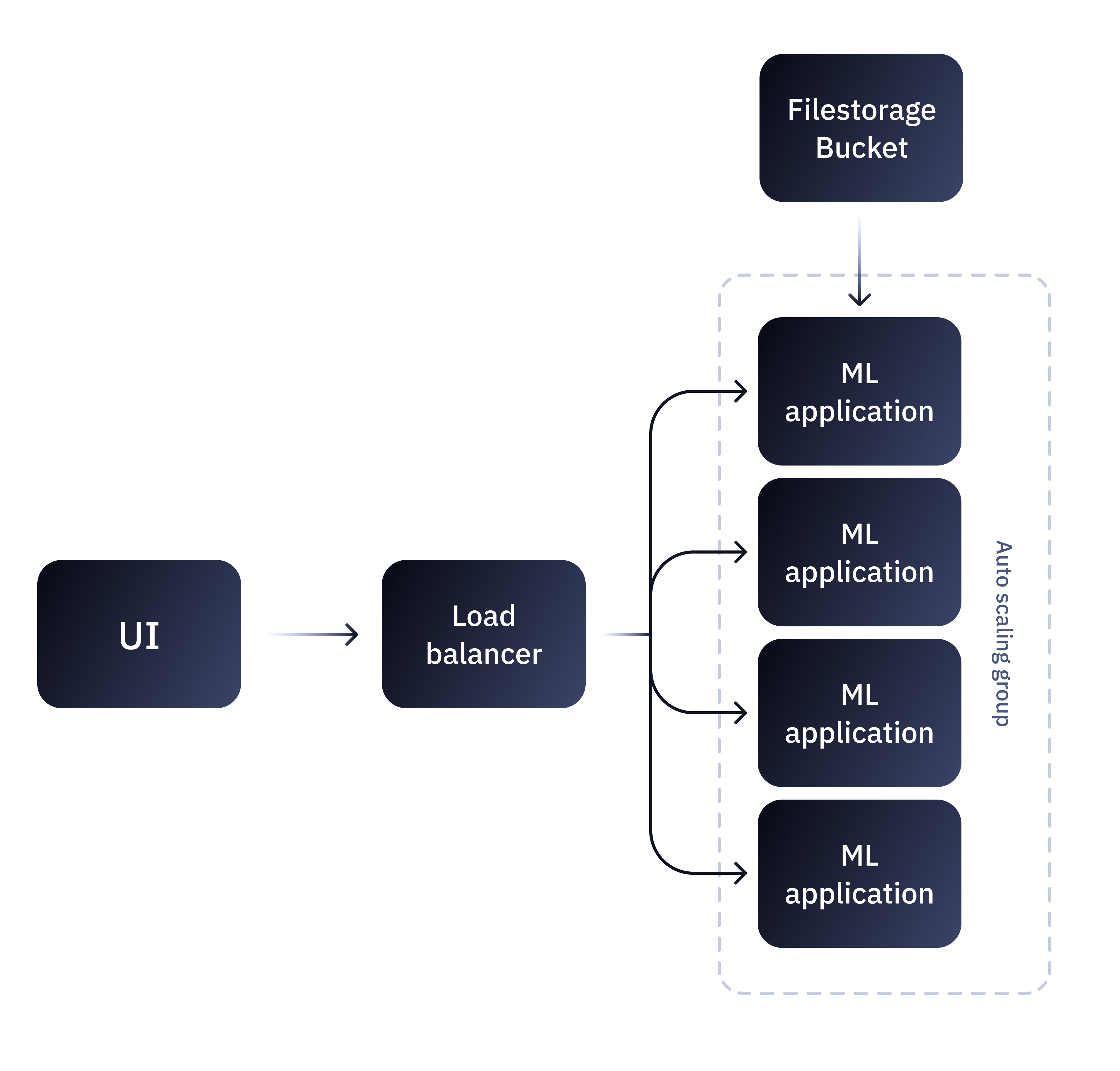

The issue that started this whole adventure, how to scale up our instances in a reliable way, was now ridiculously easy. We did some load tests to investigate the CPU and memory utilization, and then implemented a horizontal scaling policy that was triggered when the running instances reached a significant CPU load.

Final thoughts

The stateless approach has saved us time, effort, and resources since we can easily scale horizontally in production and we don’t need to consider which instances the clients should connect to. There is always a trade-off between scaling vertically and horizontally. If money were never a problem, scaling vertically is almost always easier. Do you need more memory or computation power? Go get it! Run it on a better machine! At some point this becomes unreasonable, and then you have to rethink the architecture of the solution, in our case by creating stateless services that are easy to scale horizontally.

This adventure of scalable machine learning applications will continue with developing new features to make the application even better. Stateless is good, but our use case would benefit from keeping track of the clients’ activities. Usually, one point cloud contains multiple objects that will be annotated, and with the current stateless solution, parts of the same point cloud will be downloaded multiple times. It seems that different design solutions will always have some drawbacks, where on one hand we don’t benefit from being completely stateless but on the other hand involving states makes scaling tricky.

Also, having a human in the loop puts a lot of demand on our applications, especially when it comes to responsiveness and user-friendliness. As we roll out the applications to more and more annotators, we get feedback from them which we are thankful for. Response time and different requirements from the users will make sure we develop these applications in new directions in the future.

At Kognic we are always looking out for new state of the art machine learning techniques that we can use in our annotation platform to annotate data faster with even higher quality. For our next project, we have learned to:

- Consider if the new machine learning service needs to be interactively used by the annotators, and if so,

- Early identify possible bottlenecks that might be slow in the machine learning application,

- Make sure to utilize the human in the loop as much as possible. It is an amazing source of ground truth to be able to get instant feedback on the predictions, or let the annotator indicate regions of interest, or have knowledge of both the future and the past in a video sequence.

Good luck with your next machine learning project and happy coding!

Share this

Written by